Modern life, especially in the era since roughly the introduction of the CD player in the early 1980s, has conditioned us to deal with an increasingly rapid pace of technological change. One day, $1000 “bag”-style cell phones weighing 5 pounds are being used by highly paid professionals who think of them as status symbols. Seemingly in the blink of an eye, everyone on the planet is carrying a smartphone that makes bits of the original “Star Trek” series seem old-fashioned.

Film cameras, once widely used after Kodak brought the box camera to the market around 1900, suddenly vanished in favor of digital photography.

Let’s get some perspective here. Phonographs (‘record players’ to some) arrived in 1877, and for the next 110 or so years were the standard for sound reproduction in most homes (tape recorders only turned up in any quantity in the 1960s). The CD appeared and lasted maybe 20 years before being replaced by digital media for a large segment of the population. Analog (film) photography had a similar lifespan, perhaps a century, before digital all but destroyed the medium. In both cases, the transition was sudden.

We’re used to this sort of change. Earlier generations were not. For example, archaeologists suggest it might have taken 10,000 years for humans to move from stone to early copper tools. Now, we shift our paradigms on a regular basis. It’s no wonder we’re a bit neurotic.

But why all this background material?

Planes, Trains, and Tanks

Envision the technology of the Civil War. Probably the biggest advances involved breech-loading rifles and early forays into rapid-firing guns like the Gatling. Otherwise, warfare was conducted more or less as it had been for hundreds of years, with cavalry charges and men marching into battle in tight formation. Ships were still mainly wood, with early steam-powered “ironclads” making some of their first appearances.

Then, in a generation, it all changed. The machine gun, steel ships, larger ordnance, the automobile, telegraphy, and early radios changed the face of war.

The problem, however, was that military leaders often clung to outmoded tactics and refused to acknowledge the effect that modern technology was having on their profession. Thus we had the First World War, complete with trenches, million-man battles that achieved nothing aside from keeping burial details busy, and stagnation for years on end. Cavalry charges against fixed positions and machine guns were quickly found to be suicide missions.

Airplanes, which started off as observation platforms, were carrying machine guns and bombs by the end of the war four years later. Navies focused on ever larger vessels, with battleships of the ‘dreadnought’ type reaching up to 35,000 tons. The contraption that quickly became known as the ‘tank’ made its first appearance on the battlefield.

Into this mix came a number of fanciful ideas about how warfare might continue to evolve. One of these, a paper by Rear Admiral Bradley Fiske entitled “If Battleships Ran on Land,” prompted some very amusing and (to modern eyes) ridiculous speculation.

Fiske’s paper, which was presented to the US Naval Institute around 1912, discussed the difference between armies and ships. Fiske was a well-known technical innovator with a range of significant patents already to his name, including telescopic sights for warships, a major innovation at the time that no one else seems to have thought of. In this paper, however, he appears to have been writing in a more theoretical vein. He contrasts a battleship that can be steered and maneuvered by one man (the helmsman) with the problem of controlling an army of hundreds of thousands. He asks:

“Now can anybody imagine the entire standing army of Germany being carried along at 27 miles an hour and turned almost instantly to the right or left by one man? The standing army of Germany is supposed to be the most directable organization in the world; but could the Emperor of Germany move that army at a speed of 27 miles an hour and turn it as a whole (not its separate units) through ninety degrees in three minutes?”

Basically, Fiske was talking about the problem of command and control in the field. Today’s military commanders, with instant access to nearly every unit in the field via radio and satellite communications, would probably shrug and ask what the problem was. But in Fiske’s day, controlling large units was a major issue. Messengers were still galloping on horseback from one unit to another to hand out movement orders during an attack. Radios were available, but they were heavy and often unreliable under battlefield conditions. Infantry units still used semaphore flags to pass movement orders along.

The amusement factor comes into play when the ‘civilian’ reaction to Fiske’s paper is considered. The paper was reprinted in The Book of Modern Marvels (1917), a volume dedicated to newly discovered and emerging technologies. It’s a gold mine of material from this period and will be covered in future blog posts.

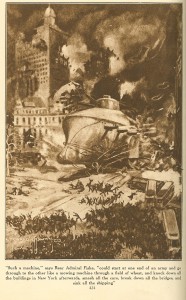

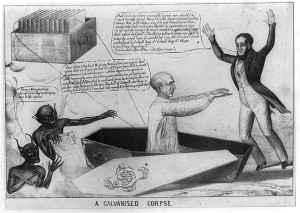

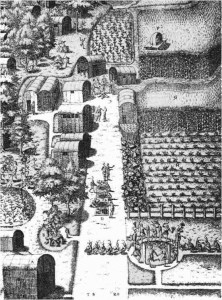

The reprint was prefaced by a fanciful illustration of a “land battleship.”

This is pretty obviously not what Fiske was talking about. Clearly, some artist got hold of the “land battleship” phrase and decided to run with it. What would such a behemoth look like, anyway? One major question is how one would get it on shore in the first place. Or was it supposed to be amphibious, sailing across the ocean to emerge on its wheels to attack the hapless enemy?

As the Russians say, “it is to laugh.”

From the Ridiculous to…the Even More Ridiculous

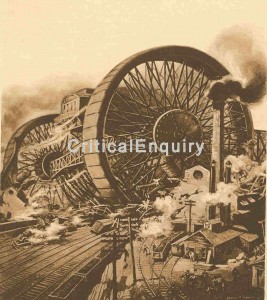

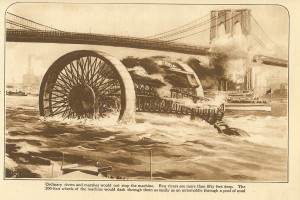

As if that illustration weren’t enough, an inventor named Frank Shuman (one of the fathers of solar power, incidentally) apparently either had a high fever or indulged in a fit of whimsy. He wrote another article, also found in The Book of Modern Marvels, entitled “The Giant Destroyer of the Future.” In it, he envisioned a monster machine with 200-foot-high latticework wheels and massive weights hanging beneath. He also uses the term “battleship on land” to describe the device, though whether he had read Fiske’s article is unknown.

Shuman envisions his invention running rampant across the enemy’s countryside, destroying towns and defenses with frightening ease. It sports a heavily armored cabin holding “perhaps thirty men,” suspended between the two wheels along with massive steam (!) engines to drive the beast. He elaborates:

“I am fully aware the problem of obtaining engines which will give this war machine a speed of one hundred miles per hour is not easily solved. But if thousands of horsepower can be developed by the engines of pitching and rolling battleships it is not unreasonable that competent engineers can be found to design and build steam engines of twenty thousand horsepower, fed by oil-fired boilers.”

He waxes almost lyrical at the end of the article. “The commander gives a signal. The machine moves. It gains headway. Soon it travels at express-train speed. […] An enemy village, occupied by enemy soldiers lies in front. The machine speeds on toward it. It reaches them. Houses are battered down as if they are made of paper.”

Clearly, Shuman had good intentions and was definitely not a crank. Or at least he wasn’t a crank when he invented a number of other very important devices. In this case, it is unclear how he thought his “land battleship” could be driven by steam engines, or how it would be robust enough to hold up under the pounding it would receive. Maybe he was just being fanciful.

Or maybe this was an outgrowth of the massive loss of life during the Great War, the conflict that was supposed to be “the war to end all wars.” The idea of a few soldiers mowing down vast swaths of enemy troops and infrastructure with little loss of life on their own side was surely appealing after horrors like Verdun (over 700,000 casualties), Passchendaele, the Somme, and the disastrous Gallipoli landings (over 500,000 casualties). In 1917, the US was just entering the war and hadn’t yet suffered the huge numbers of war dead experienced by the other powers.

Nor could Fiske and Shuman have foreseen how quickly air power would rise and transform warfare, within barely a decade. In 1921, Billy Mitchell showed that bombers could sink even the largest warships by attacking several German ships seized at the end of the war. No one listened. In 1938, a few B-17 bombers made the news by intercepting the Italian liner Rex while she was still 620 miles off New York. The sole effect was to infuriate our Navy, which thought of the oceans as its private hunting ground and promptly lobbied to have the Army Air Corps limited to operational flights within 100 miles of shore.

It wasn’t until World War II, which saw a number of battleships and other large vessels lacking adequate air cover sunk by enemy aircraft, that the era of the battleship came to an end. The Land Battleship was obsolete before it could ever be built, or even seriously considered.

Would it have worked? It’s pretty doubtful. Anti-tank guns and ever larger bombers would likely have made short work of the Land Battleship, just as aircraft did to its seagoing namesake. It might have been interesting as a terror weapon, but probably little more. Like the bag phone, film camera, and tape recorder, the battleship in all its forms (with apologies to the USS Missouri) went into the dustbin of technology.

But it would have kicked serious ass at a “monster truck” competition.